Music surrounds modern life; you can hear it everywhere. It can also make an artist famous almost overnight. In this article, we share several music production tips from professional engineers to help you produce better songs.

These tips are relevant to musicians working in many genres, from hip-hop and electronic music to all kinds of rock. We’ll also highlight the main mistakes that almost all novice musicians make, so you can avoid them in your own productions.

Learn by analyzing a lot of music

One of the most fundamental pieces of advice for aspiring artists is to analyze a large number of records. Listen to different genres, study different melodies, pay attention to artists’ performances, and watch their videos. This is an important part of your education.

Not everyone has the opportunity to graduate from Berklee, and not everyone is lucky enough to have a good mentor. But remember that the best teacher you will ever have is music itself. Even studying music at home, you will learn how to arrange, how to write harmonies and melodies, and how good material should sound. This will develop good musical taste and help you make your own music. If you become skilled at this kind of analysis, it will help you become a good producer in the future.

Become an expert in music

Talented producers are experts in their field. They have huge libraries covering many musical styles and often rich vinyl collections. They know old and new music equally well because they constantly train their ear, and they trust their ears when listening and when creating something unique and unusual.

Understanding how music is created, how it sounds, what is good and what is bad, what has gained popularity and what has not, will greatly help you in creating your own songs.

More than a university

Becoming an expert in music production takes years, and the learning never stops because the industry is constantly developing. New ideas appear in the music world all the time.

You will graduate from university, but music will keep teaching you throughout your life. If you are ready for this endless education, your work will stay relevant, you will keep up with trends, and you will make high-quality tracks that are played and sung by millions.

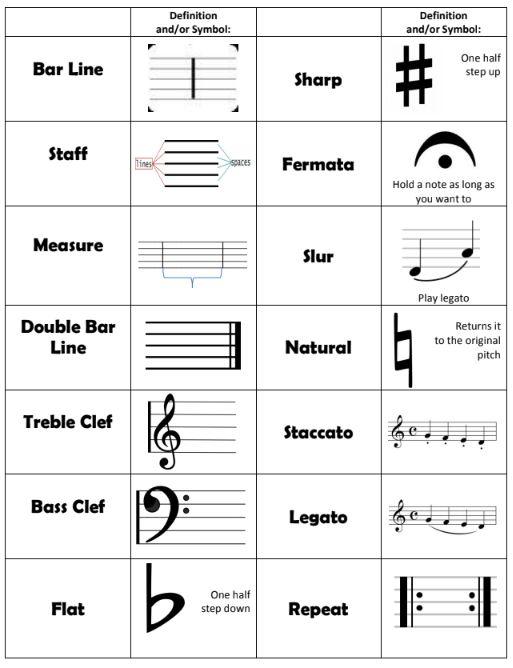

Learn music theory

Almost every experienced music producer, and indeed anyone even slightly involved in creating music, will agree that an in-depth study of theory directly affects the quality of the result. Yet not everyone takes this advice. Beginners often see music theory as boring and useless, but this view is wrong, and refusing to learn theory will hold back your progress.

Difficult but necessary

Studying theory is one of the most difficult but most useful stages of your musical education. A fundamental understanding of notes, chords, and intervals will help you work in your DAW and find a common language with producers, mixing and mastering engineers, and other artists.

It is difficult to convey your idea to another musician without professional musical vocabulary. Don’t get me wrong: I’m not suggesting you study day and night to learn everything in music theory, but you must understand the basics and be able to use musical terms. This will give you a great advantage when communicating with other professionals in the field.

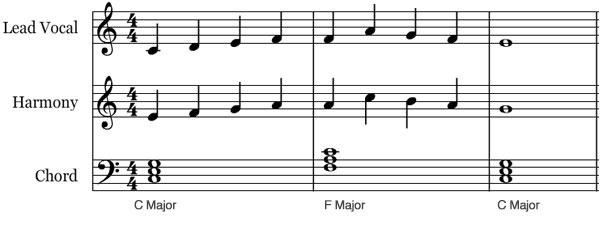

Music theory is the songwriter’s best friend

Many people underestimate the basics of theory, yet specialists in this field who graduated from, for example, Berklee have everything they need to create unique musical projects in any genre. Their work shows their grasp of music theory, including chord progressions, rules of harmony, and more.

All songs are built according to certain rules, and once you know these rules, composing tracks becomes much easier. The final record also sounds better: polished and pleasant to the listener.

Record everything around you

Novice musicians often hear advice from producers and mixing and mastering engineers to record everything around them in everyday life. You can record on a dedicated recorder or on an ordinary phone.

It’s a good idea to make a habit of taking short walks with a recorder and trying to catch interesting sounds and melodies around you. The point for a novice producer is not just to listen back to the recordings after the walk but to learn how to work with them in a DAW and cut them into samples.

Many find this stage fascinating: when you focus fully on each sound, you learn to trust your ears and start noticing even the smallest flaws in your recordings.

On top of that, this habit is a good source of inspiration and new sounds. The sound of a bird taking flight or the laughter of a passerby can be sampled and used in your tracks.
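
In a DAW, cutting a sample means trimming a region of the recording and exporting it. As a rough illustration of the same step outside the DAW, the Python sketch below uses the standard `wave` module to copy a time range from an uncompressed (PCM) WAV recording into a new file. The function name `cut_sample` is hypothetical, and the demo uses a silent in-memory recording instead of a real field recording.

```python
import io
import wave

def cut_sample(src, dst, start_s, end_s):
    """Copy the audio between start_s and end_s (in seconds) from the PCM WAV
    `src` into a new WAV `dst`. Both may be file paths or binary file objects."""
    if not 0 <= start_s < end_s:
        raise ValueError("need 0 <= start_s < end_s")
    with wave.open(src, "rb") as reader:
        rate = reader.getframerate()
        first = int(start_s * rate)
        last = min(int(end_s * rate), reader.getnframes())
        if first >= last:
            raise ValueError("selection lies outside the recording")
        reader.setpos(first)
        frames = reader.readframes(last - first)
        params = reader.getparams()
    with wave.open(dst, "wb") as writer:
        writer.setparams(params)    # same channels, sample width and sample rate
        writer.writeframes(frames)  # the header's frame count is patched on write

# Demo: a one-second silent mono recording held in memory instead of a real file.
recording = io.BytesIO()
with wave.open(recording, "wb") as w:
    w.setnchannels(1)
    w.setsampwidth(2)      # 16-bit samples
    w.setframerate(8000)
    w.writeframes(b"\x00\x00" * 8000)
recording.seek(0)
clip = io.BytesIO()
cut_sample(recording, clip, 0.25, 0.75)
clip.seek(0)
with wave.open(clip, "rb") as r:
    print(r.getnframes())  # 4000 frames = 0.5 s at 8 kHz
```

With real files you would pass paths instead, for example `cut_sample("walk.wav", "bird.wav", 12.5, 13.2)`, where both file names and times are made up.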

Organize your studio

In any profession, one of the main conditions for productive, high-quality work is a well-organized workplace. Music production is no exception, and here are a few tips for setting up your future studio.

1. Your studio must be conveniently organized

The room should be comfortable, and everything in it should be within easy reach.

2. Have another place to eat, sleep and dream

A workplace is more than a chair and a table, especially for a musician, so avoid putting all your equipment in the room where you sleep or eat.

Working in such a room can make you less productive. Your workspace should encourage you to try new things in your tracks and listen to your music more carefully, leave room for instruments if needed, and not lull you into sleep or routine.

3. Expensive doesn’t beat smart

You don’t need to spend a lot of money on the most expensive gear. With a clear understanding of what you need and a sensible budget, building your own studio room is not difficult.

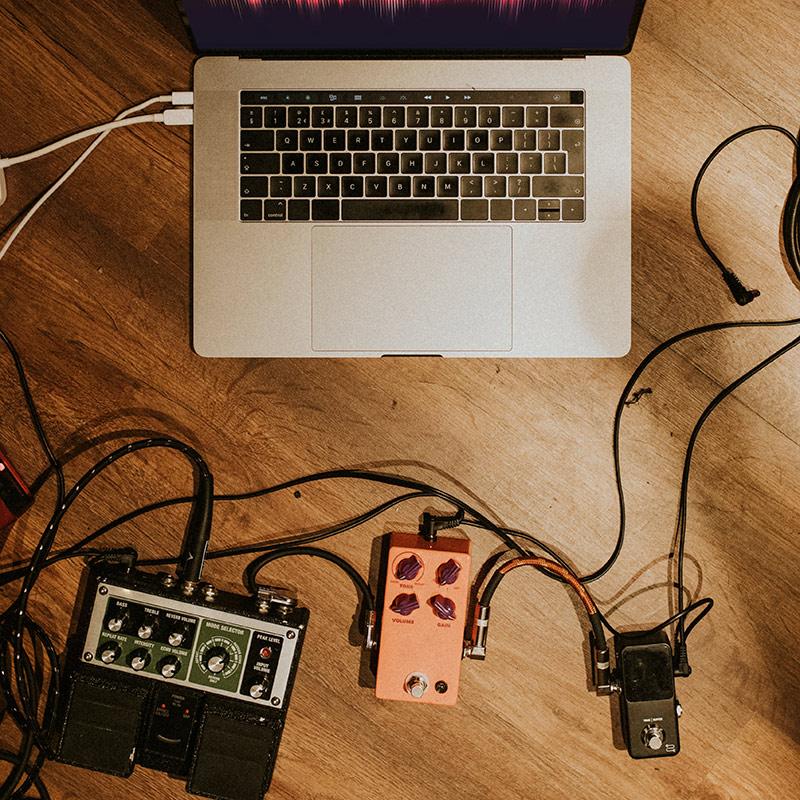

Use a minimum amount of equipment

One of the most misguided tips pushed on aspiring artists is to buy all the equipment right at the start, but this is nothing more than a myth. The quality of your future tracks does not depend on owning the large amount of equipment advertised in articles across the Internet.

Sometimes a laptop and headphones are enough to get into the Billboard charts and even win awards! To start making music, you only need the following:

- a good audio interface (sound card)

- open-back headphones, so your ears are less strained during long sessions

- studio monitors with separate drivers for high and low frequencies

- a MIDI keyboard for recording various instruments

Having this equipment in a home studio is far more convenient than using studios you have to travel to. Time spent travelling is wasted when you can equip one room and turn it into a studio. With everything in one place, whenever you want to record something, or edit or fix a recording in your DAW, all you need is at hand.

Begin with a concrete vision

When you take on a job, the most basic rule at this stage is: never start production until the track or arrangement has taken shape in your head.

1. Start with a general understanding

A master of the craft never starts work without a general understanding of what the composition should be like, which tools to use, what genre it belongs to, and how best to record it. All this helps ensure that listeners will like the composition and respond to it.

2. Form a clear vision of the project

If a producer starts working without a clear vision, they can lose the main idea of the track along the way. Once they wander off, the initial concept may never come back to them.

Losing focus is a common problem, and producers who ignore this advice often stay at the same level with no room for further growth.

3. Take your time

If a music producer needs time to record audio and build an interesting project, let them take it! As practice shows, inspiration does not come instantly. To come up with a unique idea that will leave its mark on music history, you need time and effort to think through the structure, harmony, and overall concept of the track in your head.

Take rest days as a music producer

One of the most interesting tips is to have “fasting days.” The term usually comes from nutrition, where it means letting your body rest from heavy food for a day; for music producers, it means giving your head a break from producing finished material and projects.

How to organize the resting day

This does not mean taking a complete day off and stepping away from work entirely.

The goal is not to work for the sake of work but to follow your own curiosity. Explore samples that haven’t yet had enough of your time and attention, learn new material to improve your skills a little, and create drafts.

On this day you are not rushing headlong through tasks but spending time on what you enjoy. You may be surprised: when you return to work the next day, you will be grateful for the sketches you made the day before. Ideas that might otherwise have slipped out of your head become material for a new project.

This practice has been around for a long time, and many producers set aside one day a week to simply generate new material and create samples to use later.

Use reference tracks

Reference tracks are finished recordings that producers compare their work against when mixing. The ideal references that first come to mind are well-known hits mixed by professional audio engineers, whose quality is beyond doubt.

How reference tracks affect you

A novice producer should understand exactly how references help their own work:

- First, they are excellent ear training, giving you a full understanding of what high-quality music sounds like and how deep a sound picture can be.

- Second, they greatly simplify mixing and mastering. While you work, you can place the reference on a separate track in your session and switch to it at any time to compare the sound.
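
When you switch between your mix and a reference, the louder one tends to sound better simply because it is louder, so it helps to bring both to a similar level before comparing. The Python sketch below estimates a trim for the reference from RMS level. It is a simplification under stated assumptions: samples are floats where 1.0 is full scale, RMS is only a rough stand-in for perceived loudness (loudness meters such as LUFS weight frequencies to follow human hearing), and the function names are hypothetical.

```python
import math

def rms_dbfs(samples):
    """RMS level in dB relative to full scale (a sample value of 1.0)."""
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return 20 * math.log10(rms) if rms > 0 else float("-inf")

def reference_trim_db(mix, reference):
    """Gain in dB to apply to the reference so its RMS level matches the mix."""
    return rms_dbfs(mix) - rms_dbfs(reference)

# Made-up example: the reference has twice the amplitude of the mix.
mix = [0.25, -0.25] * 100
reference = [0.5, -0.5] * 100
print(f"turn the reference down by {-reference_trim_db(mix, reference):.1f} dB")
```

Here the sketch reports a trim of about 6 dB, since doubling the amplitude raises the level by 20·log10(2) ≈ 6.02 dB.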

Comparing your own projects with your chosen references also teaches you to hear how sound differs across playback devices.

With a reference track to rely on and a clear idea in your head, you are much less likely to wander off course.

Be really creative

The modern music industry constantly demands something new and unusual for its listeners. Being inventive is one of the most obvious music production tips, yet over time many people forget about it completely.

Working routine kills creativity

A producer can become so immersed in routine work that they turn into an office drone and forget about the creative side. They simply churn out cookie-cutter tracks instead of creating unique material.

Sometimes it’s worth deviating from the standard rules to create something unique.

Music production is constantly evolving

Some producers go through a period of relying on the same templates for a long time, unwilling to develop and learn something new.

As mentioned earlier, the music industry is constantly evolving, and a template that works today may be irrelevant in a couple of years.

It is a good idea to keep looking for new approaches, try recording things in different ways, and learn from freely available videos.

Don’t throw your crazy ideas away

On YouTube, there is a video in which someone decided to literally “record with a potato”: they plugged a guitar into a potato, then the potato into an amplifier, and got an unusual sound. The point is that even if an idea sounds crazy, on a day off, a day to just doodle and experiment free from mandatory tasks, you may actually implement it and create something new that’s worth attention.

After all, if it wasn’t for the creation of new ideas, we wouldn’t have such diversity of genres, and people would still be listening only to classical music!

Don’t work at high volume

The human ear is a complex mechanism, and listening volume greatly affects how you perceive sound in general and how you judge mixes in particular. Because of the strain it puts on the ears, audio engineering is considered demanding work.

If you don’t want your music career to end early, take care of your hearing first and learn to work at low volumes. Mixes in the studio should always be played at a level that is comfortable to listen to.

Can I work with high volume audio at all?

From time to time you can turn the volume up to check the balance, but you should not work at a high volume all the time.

Consequences of working with loud music

Loud volume harms not only your ears but also your nerves: it can lead to stress and sleep problems, which inevitably lower your productivity and creativity.

Create a habit for moderate loudness

At first, working at low volume may feel awkward and unusual, but believe me, every master of audio engineering has gone through this and built the habit of working at a moderate rather than a loud volume. Those who failed to do so often retired earlier than they planned.

So work at a moderate volume whenever you can, and you’ll soon get used to it.

Use only high-quality samples

Using low-quality samples is the most common mistake, and every audio engineer has seen it. Low quality can destroy your track, and no one will be able to fix it.

There is a rule in music production: mistakes always carry over to the next stage of the process. In the end, they all land in the finished product, layered on top of each other.

- If you made a mistake while recording a song, the arrangement won’t fix it.

- If you used bad sounds in the arrangement, the mixing engineer won’t be able to correct them.

- The mastering engineer won’t fix a bad mix; the mistakes will be audible in the master. As a result, the song is spoiled from the very beginning.

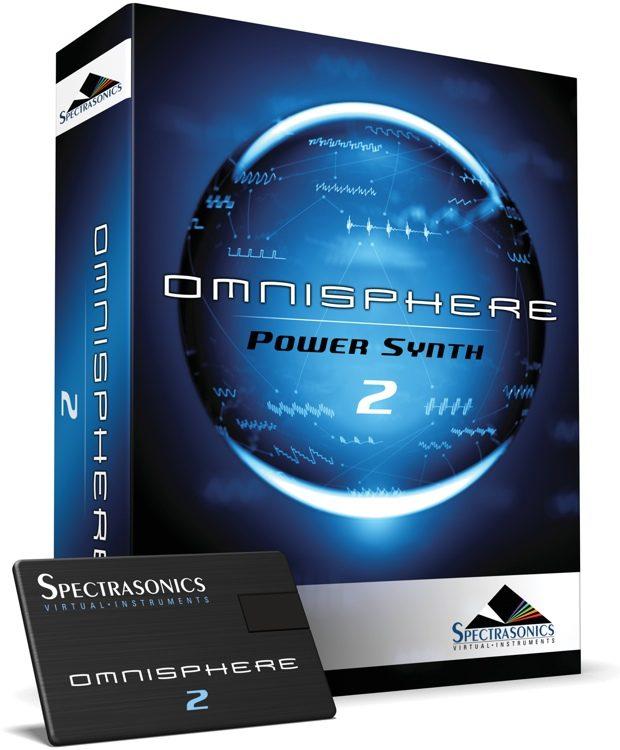

Always choose high-quality sounds that work well without plugins. For example, subscribing to Splice is a good idea: it’s a great library where you’ll find hundreds of sounds created by professional producers.

It also doesn’t hurt to buy a good synthesizer, either hardware (analog) or software; examples of the latter include Omnisphere and Spire. Which one you choose is not that important, as long as it is a good synthesizer that covers your needs and helps you, for example, learn how to approach new gear.

However, don’t stop at one library; keep searching. Take your favorite artists, for example: you can often find out, even on YouTube, which libraries and sounds they use. People usually don’t hide this information, and over time you will build your own sound bank, which will greatly help you make high-quality mixes in the future.

Don’t do mixing during the production process

Using plugins in the sound selection process is the second common mistake.

Don’t put plugins on sounds

Plugins are software modules that extend your DAW’s functionality, for example by adding new processing to a track. A typical scenario: someone producing a beat adds a sound to the arrangement, puts an equalizer, reverb, or compressor on it, gets a good sound out of the instrument, and in the end the mix seems ready for listening.

However, this is a big mistake: by doing so, you limit the potential of the sound and make it harder for the mixing engineer to produce a good song. The most important skill is choosing and producing sounds so that they sound good without plugins.

Use analyzers while producing

Another recommendation is to use a spectrum analyzer when choosing sounds. Put the analyzer on the master bus: this way you will see the frequency content and can compare your sound or arrangement with your references.

This is useful because monitors sometimes cannot reproduce frequencies below 50 Hz. A kick may sound good to you, yet when you open the analyzer you may see a huge dip in the lows below 50 Hz that you could not hear before. That’s why it is always worth checking a track with an analyzer.
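
To show what an analyzer measures under the hood, the Python sketch below computes how much of a signal’s energy sits below 50 Hz using a plain discrete Fourier transform. It is only an illustration under simple assumptions: real analyzers use an FFT with windowing and display the whole spectrum, and the two sine tones here are hypothetical stand-ins for a kick with and without deep low end. The function name `low_band_share` is also made up.

```python
import cmath
import math

def low_band_share(samples, sample_rate, cutoff_hz=50.0):
    """Share of spectral power below cutoff_hz, from a naive DFT.
    O(n^2): fine for short snippets; real analyzers use an FFT."""
    n = len(samples)
    total = low = 0.0
    for k in range(n // 2 + 1):                 # bins from 0 Hz up to Nyquist
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(samples))
        power = abs(coeff) ** 2
        total += power
        if k * sample_rate / n < cutoff_hz:     # centre frequency of bin k in Hz
            low += power
    return low / total if total else 0.0

# Hypothetical test tones: one second at a 500 Hz sample rate (1 Hz per bin).
RATE = 500
deep_tone = [math.sin(2 * math.pi * 40 * t / RATE) for t in range(RATE)]
thin_tone = [math.sin(2 * math.pi * 120 * t / RATE) for t in range(RATE)]
print(f"40 Hz tone, energy below 50 Hz: {low_band_share(deep_tone, RATE):.0%}")
print(f"120 Hz tone, energy below 50 Hz: {low_band_share(thin_tone, RATE):.0%}")
```

The 40 Hz tone reports essentially all of its energy below 50 Hz and the 120 Hz tone essentially none: the kind of difference an analyzer reveals even when your monitors cannot reproduce it.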

Use fewer sounds

It may sound counterintuitive, but the fewer sounds you use, the better your production will turn out.

Kicks

We often see people use two or three kicks at once. In theory this can work, but stacking unfiltered hits often leads to unpredictable results. Someone with little experience, an untrained ear, or a poor understanding of how instruments behave won’t be able to cope with the problems that arise, and will have a hard time mastering the craft.

Layering is acceptable, of course, but follow one rule: two layers per sound. For a kick, you can use one low-frequency sound and one high-frequency sound, cutting off the top of the first and the bottom of the second. This way you can pick a kick with a good low end and a kick with a good top, combine them, and get the sound you want.
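
The two-layer idea can be sketched in a few lines of Python. This is an illustration under simple assumptions: each layer is a list of samples at the same sample rate, and the filters are gentle first-order (6 dB per octave) designs, whereas the EQs in a DAW usually offer much steeper slopes. The function names and the crossover frequency you pass in are hypothetical choices, not a standard.

```python
import math

def one_pole_lowpass(samples, cutoff_hz, sample_rate):
    """First-order (6 dB/octave) low-pass: y[n] = y[n-1] + a * (x[n] - y[n-1])."""
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    out, y = [], 0.0
    for x in samples:
        y += a * (x - y)
        out.append(y)
    return out

def one_pole_highpass(samples, cutoff_hz, sample_rate):
    """Complementary high-pass: the input minus its low-passed version."""
    low = one_pole_lowpass(samples, cutoff_hz, sample_rate)
    return [x - lo for x, lo in zip(samples, low)]

def layer_kick(low_kick, high_kick, crossover_hz, sample_rate):
    """Keep the bottom of low_kick and the top of high_kick, then sum the layers."""
    bottom = one_pole_lowpass(low_kick, crossover_hz, sample_rate)
    top = one_pole_highpass(high_kick, crossover_hz, sample_rate)
    return [b + t for b, t in zip(bottom, top)]  # stops at the shorter layer
```

For example, `layer_kick(deep_kick, punchy_kick, 150.0, 48000)` would keep the lows of a hypothetical `deep_kick` and the highs of `punchy_kick`. Because the high-pass here is defined as “input minus low-pass”, feeding the same sound into both layers gives back the original, which is a handy sanity check.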

That said, beginners are better off not layering at all. Selecting low and high layers and filtering them takes a lot of time, and it creates too many technical problems that distract from the mix itself and derail the creative process.

Snare

The same is true for the snare. We often see beginners’ sessions with lots of snares, which make the sound muddy. The best approach to snare layering is to use one snare and add an overtone layer, such as a clap or snap, to emphasize the drum itself. But snare layering shouldn’t turn into an endless stacking of sounds in search of a good result. Select one good snare and one good clap, and that will be enough.

Hat

A beginner can use three hi-hats, which makes sense from the point of view of the stereo field: one hat on the left, one in the center (the phantom image between the two speakers), and one on the right. That way they fit into the mix without interfering with each other.
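
Placing a sound at a position in the stereo field comes down to choosing a left and a right gain. The Python sketch below assumes the common sine/cosine constant-power pan law, which is one of several pan laws in use, and hypothetical pan positions for the three hats; the function name is also made up.

```python
import math

def constant_power_pan(pan):
    """Left/right gains for a pan position from -1.0 (hard left) to 1.0 (hard right).
    Sine/cosine law: left**2 + right**2 equals 1, so total power stays constant."""
    if not -1.0 <= pan <= 1.0:
        raise ValueError("pan must be between -1.0 and 1.0")
    angle = (pan + 1.0) * math.pi / 4.0   # maps -1..1 onto 0..pi/2
    return math.cos(angle), math.sin(angle)

# Hypothetical positions for three hats: left, center, right.
for name, pan in [("hat_left", -0.6), ("hat_center", 0.0), ("hat_right", 0.6)]:
    left, right = constant_power_pan(pan)
    print(f"{name}: left gain {left:.3f}, right gain {right:.3f}")
```

A centered hat gets about 0.707 (roughly -3 dB) in each speaker, so it keeps roughly the same perceived level as a hat panned to one side. DAWs let you choose between several pan laws, so the exact numbers in your session may differ.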

You can layer accent cymbals on top, such as crashes, reverse cymbals, splashes, and rides, but there shouldn’t be many of them. The trick is to stick to minimalism in the arrangement, because the fewer sounds you use, the better the track will sound in the end.

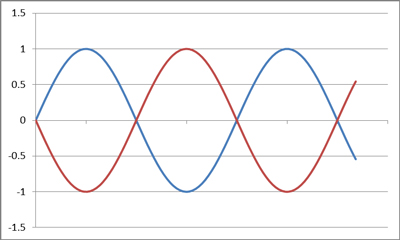

Use ONE bass

One of the most important music production tips for aspiring artists is to use only one bass.

If you add a second bass, put it in a different octave from the first. Low-frequency sounds are especially prone to phase interference, so it is worth understanding this topic.

What are phase issues?

Suppose we play two waves of the same frequency that move in opposite directions (when one rises, the other falls). They weaken each other, and if their amplitudes are equal, they cancel out completely and add up to zero.

This is easy to see in practice, not just in theory. An ordinary speaker produces sound by moving its cone back and forth. If two opposite waves are fed into the same speaker, the cone simply stays still, because it cannot move forward and backward at the same time.
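
The cancellation described above is easy to reproduce numerically. The Python sketch below is an illustration under simple assumptions (pure sine waves, a 48 kHz sample rate, a 55 Hz tone standing in for a bass note): it sums a sine with an identical copy and with a copy shifted by half a cycle (180 degrees), then reports the peak level of each sum.

```python
import math

SAMPLE_RATE = 48_000  # assumed sample rate in Hz

def sine(freq_hz, phase_rad, n_samples):
    """Unit-amplitude sine wave starting at the given phase."""
    return [math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE + phase_rad)
            for i in range(n_samples)]

def peak(samples):
    """Largest absolute sample value."""
    return max(abs(s) for s in samples)

bass = sine(55.0, 0.0, SAMPLE_RATE)                # one second of a 55 Hz tone (A1)
same_phase = sine(55.0, 0.0, SAMPLE_RATE)          # identical copy
opposite_phase = sine(55.0, math.pi, SAMPLE_RATE)  # copy shifted by 180 degrees

reinforced = [a + b for a, b in zip(bass, same_phase)]
cancelled = [a + b for a, b in zip(bass, opposite_phase)]
print(f"in phase: peak {peak(reinforced):.3f}")       # close to 2.0: the waves add up
print(f"opposite phase: peak {peak(cancelled):.3f}")  # 0.000: the waves cancel out
```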

Similarly, when you stack several basses, there is a good chance that some of their frequencies will be out of phase and weaken each other. The effect isn’t necessarily static, simply lowering the level; it can be dynamic.

At one moment in the track the frequencies reinforce each other, while elsewhere, on certain notes, they cancel each other and the bass gets quieter. The same thing can happen between the kick and the bass.
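
One simple case of this dynamic behaviour is two bass layers that are slightly detuned from each other: their phase relationship keeps drifting, so they alternately reinforce and cancel, an effect known as beating. The Python sketch below assumes two equal-level sine waves at 55 Hz and 56 Hz, a 48 kHz sample rate, and 50 ms analysis windows, all chosen only for illustration, and measures the peak level of their sum in each window.

```python
import math

SAMPLE_RATE = 48_000  # assumed sample rate in Hz

def window_peaks(f1_hz, f2_hz, seconds, window_s=0.05):
    """Sum two equal-level sine waves and return the peak level of each window."""
    n = int(SAMPLE_RATE * seconds)
    mix = [math.sin(2 * math.pi * f1_hz * i / SAMPLE_RATE)
           + math.sin(2 * math.pi * f2_hz * i / SAMPLE_RATE) for i in range(n)]
    w = int(SAMPLE_RATE * window_s)
    return [max(abs(s) for s in mix[start:start + w])
            for start in range(0, n - w + 1, w)]

levels = window_peaks(55.0, 56.0, seconds=1.0)   # two basses 1 Hz apart
print(f"loudest window: {max(levels):.2f}, quietest window: {min(levels):.2f}")
```

Over one second the peak level swings from nearly 2.0 (full reinforcement) down to a small fraction of that (near cancellation) and back, once per second, which matches the 1 Hz difference between the two frequencies.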

Kick and bass shouldn’t sound at the same moment on the same note, because the kick will then cancel or reinforce some of the bass frequencies. The result is a bass that jumps out on one kick, a kick that jumps out on another, or a bass that swallows the low end of the kick. This instability does the music no good.

Avoid the collision

A subwoofer is a single speaker, and it can’t fit many sounds at once. Low-frequency waves are long and take up a lot of space, so ideally only one low-frequency sound should play at a time. When the kick hits, there shouldn’t be a bass note at that moment, or the bass should be far enough from the kick in frequency that the two don’t add up.

Sometimes the pitch of the kick matches the pitch of the bass, they reinforce each other strongly, and one particular note sounds louder than the rest. You need to avoid that.

There are many ways to avoid this situation, but since we are talking about the arrangement stage, the best way is to separate instruments in time (so that no bass sounds when the kick is hit).

You can cut the bass at the moment the kick sounds, or rework the parts so that the bass doesn’t play when the kick hits. This gives you a smooth, stable bass and plenty of headroom overall.
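
Separating the kick and bass in time can be done by hand in the arrangement or with a volume envelope on the bass. The Python sketch below shows the envelope idea in its simplest form. It assumes the kick positions are already known as sample indices, mutes the bass for a fixed gap after each hit, and fades it back in linearly. The function name and the gap and fade lengths are hypothetical; in practice, sidechain compression is a common way to get a similar ducking effect automatically.

```python
def carve_bass_around_kicks(bass, kick_onsets, gap_samples, fade_samples):
    """Return a copy of `bass` that is silent for `gap_samples` after each kick
    onset (a sample index), then fades back in linearly over `fade_samples`."""
    gain = [1.0] * len(bass)
    for onset in kick_onsets:
        for i in range(onset, min(onset + gap_samples, len(bass))):
            gain[i] = 0.0                     # no bass while the kick hits
        for j in range(fade_samples):
            i = onset + gap_samples + j
            if i >= len(bass):
                break
            # min() keeps the quieter gain where regions from different kicks overlap
            gain[i] = min(gain[i], (j + 1) / fade_samples)
    return [sample * g for sample, g in zip(bass, gain)]

# Usage with made-up numbers: a constant "bass" and kicks at samples 5 and 12.
shaped = carve_bass_around_kicks([1.0] * 16, [5, 12], gap_samples=3, fade_samples=2)
print(shaped)
```

The fade only smooths the bass’s return; the cut itself lands under the kick, where it is masked.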

Think about vocals while doing accompaniment

My main advice on accompaniment: don’t write it on the same notes as the vocals. Otherwise, the situation we discussed with the bass repeats on a smaller scale: the whole accompaniment interferes with the vocals, and in the end there is a big fight for space.

Don’t use too many accompaniment elements

In the accompaniment, as everywhere else, try to use a minimum of sounds; this is one of the best music production tips for beginners. You should have two or three main elements, say a piano (or synthesizer) and a guitar, and everything else should be built around them to complement the melody.

Place accompaniment apart from the vocal

If you analyze big commercial releases, you may notice that the accompaniment usually sits in one octave and the vocals in another. That is, if your accompaniment (synthesizer, guitar, piano) is low, the vocals should ideally be high. This is not a hard rule, but it greatly simplifies your work: you won’t need to shift parts in time, play tricks with pauses, or spend a lot of time on mixing and automation. It’s the simplest option.
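
One way to check this while arranging is to compare the pitch ranges of the parts. The Python sketch below assumes each part is a list of MIDI note numbers (60 is middle C) and standard equal temperament with A4 = 440 Hz; the function names and the contents of both parts are made up for illustration.

```python
def midi_to_hz(note):
    """Equal-tempered frequency of a MIDI note number, with A4 (note 69) = 440 Hz."""
    return 440.0 * 2 ** ((note - 69) / 12)

def ranges_overlap(part_a, part_b):
    """True if the pitch ranges (lowest to highest note) of two parts overlap."""
    return min(part_a) <= max(part_b) and min(part_b) <= max(part_a)

# Made-up parts as MIDI note numbers (60 = middle C).
piano_part = [48, 52, 55, 57, 60]   # C3 .. C4
vocal_part = [64, 67, 69, 72, 74]   # E4 .. D5
print(ranges_overlap(piano_part, vocal_part))   # False: separate registers
print(f"piano top: {midi_to_hz(max(piano_part)):.1f} Hz, "
      f"vocal bottom: {midi_to_hz(min(vocal_part)):.1f} Hz")
```

Here the accompaniment tops out at C4 while the melody starts at E4, so the ranges don’t overlap. As the text says, this is not a hard rule; a check like this only flags where a closer listen is worthwhile.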

Following this rule makes it much easier to build an arrangement in which the elements don’t compete for space: the accompaniment won’t fight the vocals, and the vocals won’t get buried behind the accompaniment. And again, stick to minimalism: use one main element and a few supporting ones. Don’t, for example, stack several synthesizers on top of each other; it will all blend into one big mess and ruin the track.

Use FX carefully

After you’ve recorded the accompaniment, it’s usually time for FX: uplifters, downlifters, sub sweeps, and so on. The most important rule here is not to use too many of them and to make sure the FX don’t collide with the kick and bass in the low end. If you use a sub sweep, for example, make sure no bass is playing at that moment.

Compose the backing vocal part

Backing vocals can be used in many ways, and this element is quite experimental, but a couple of nuances should be taken into account.

Mind accompaniment when composing backing vocals

When you build vocal harmonies, make sure the notes in the backing vocals don’t clash with the notes in the accompaniment. In general, the most common mistake we see with backing vocals is an arrangement that is already huge and packed with instruments, with lots of backing vocals piled on top. In the end, it all blends into a mess, since a large stack of backing vocals is just another instrument.

Can you compose a symphony?

Combining a large orchestra with a large choir is extremely difficult without vast experience and skill. Quite a few such projects have been created, to be honest, but to make them you need to be very well versed in arrangement in general.

However, if you have listened to classical music with large choirs for many years, you may be able to compose such an arrangement intuitively, with every element correctly timed.

Keep your session clean

This point is more about organization. Before mixing and mastering, put your future track in order.

Remove unwanted clicks, make sure every sound and instrument is recorded in high quality, name every track clearly, and don’t forget to save. For mixing and mastering, the engineer imports the multitrack into their own session, and well-organized, clearly named tracks let them get to work immediately.

Professionals do all this so that fixing problems at later stages takes as little time as possible.

Approach this responsibly and take the time to structure your work. An orderly session makes a good impression on the people you collaborate with, and the work becomes much more efficient.

Finish your production

Working in a creative industry always means a lot of effort, a constant search for inspiration, and painstaking work on every sound and instrument. Many people, however, get stuck with unfinished work once inspiration or energy runs out.

Will you ever have more time?

The key tips for beginners here are full concentration and the ability to bring a piece of work to its final stage. When we get stuck, it may seem better at first glance to shelve a track until better times rather than finish it badly.

However, finishing is an experience in itself, and every piece of work should be completed, whatever form it ends up in. Otherwise, a year from now you may have hundreds of unfinished verses, choruses, and bridges that cannot be connected to each other in any way.

Fight the habit of postponing before it’s too late

Our brain remembers sequences of actions. If you teach it to feel a sense of completion after writing just a verse, with no further work, you can get used to that pattern and never finish a single song.

Every music producer faces this problem, and its solution shouldn’t be put off. Sooner or later everyone runs into it, so it’s better to deal with it as early as possible!

Ask for feedback

Music shapes the mood and emotional life of every generation. If an aspiring music producer wants to create music that will leave its mark on history, they should always seek feedback.

Make friends and relatives your first critics

Don’t be afraid to show your work to friends or relatives; it will be useful. Many people, before playing their track, start justifying it by pointing out weak low end, problems with the melody, poor vocals, or other shortcomings they are unsure about. You are your own most important critic: by the time you present your work to others, you already understand what it lacks and what, on the contrary, is unnecessary.

Get opinions from industry specialists

Make new acquaintances in the music field: spend your free time with mastering and recording engineers, other producers, and musicians, and listen to them carefully. While you are not yet a recognizable name in the music industry, take every piece of advice from masters of the craft gratefully and keep studying music for your future creative path.

Keep the final word for yourself

When you receive feedback, separate genuinely useful criticism from criticism that is completely unjustified. People won’t always support an aspiring musician’s first steps, but remember that music, like any creative activity, can never please everyone in the world.

Listen to your music

There are no secrets or complicated music production tips in this section. The reality is simple: listen to your music. Analyze your track, look for every possible flaw, listen to old projects, and compare them with the mix you are working on now. Whether it’s pop, rock, hip-hop, electronic music, or any other genre, it can be equally unique and expressive.

A project you developed from an idea a year ago may, given your experience and improved skills, sound nothing like your current work. Your early pieces may also contain something unusual that you have since forgotten.

Music is a creative process that helps not only others to hear a new song, a new mix, new melodies and lyrics, but also, first and foremost, helps you understand yourself better.

Each point in this article will help you improve the quality of your work, learn things you might not have known before, and see which points deserve more of your attention. Don’t be afraid to experiment in music production, because only by learning from your mistakes can you create something that will leave its mark on music history!

Check out our music production services if you need help with production or with mixing and mastering your track!